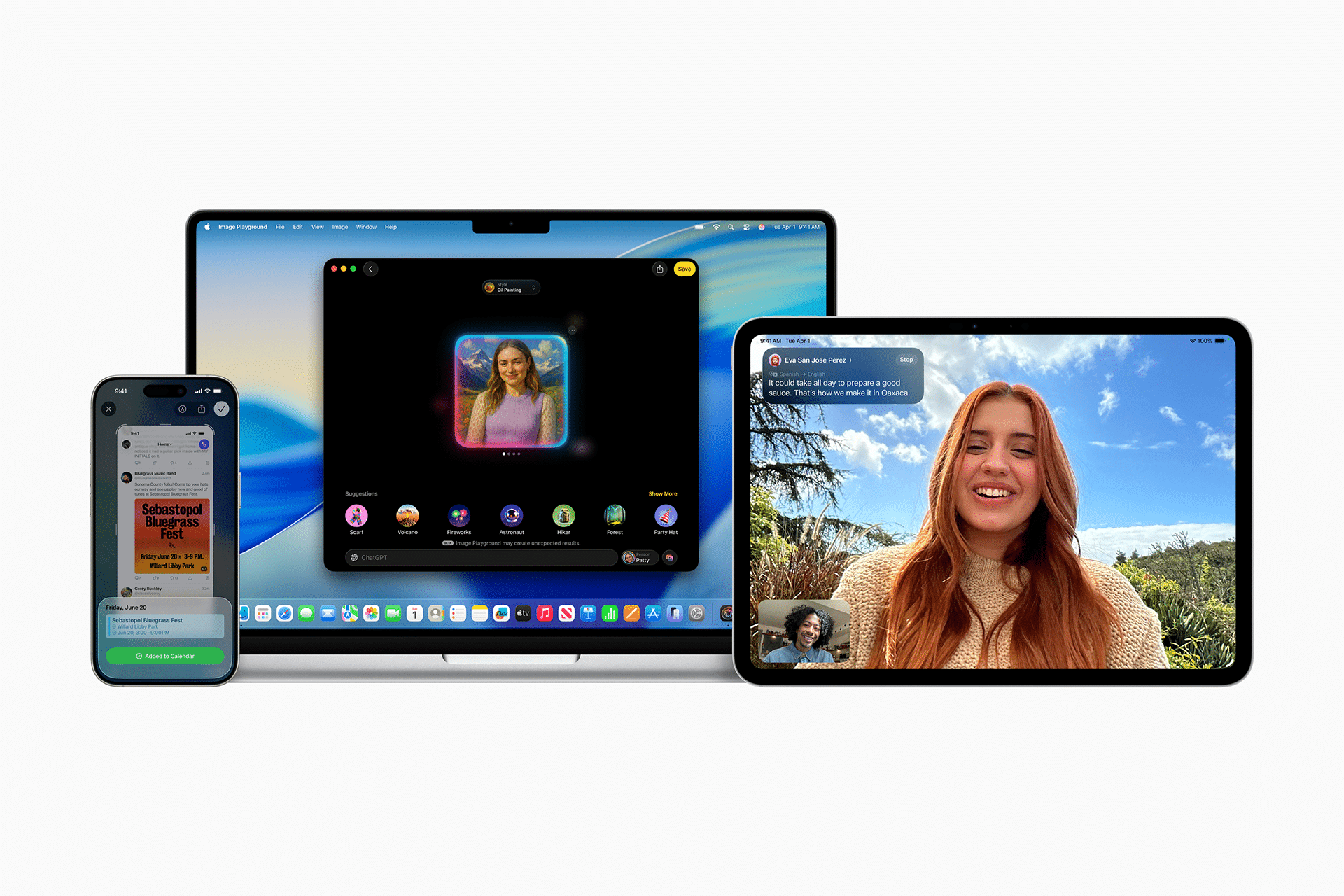

"Hey Siri, prep for tomorrow's meeting." A Siri that checks your calendar, finds relevant emails, and even creates briefing notes. This isn't sci-fi. It's expected to arrive in March 2026 with iOS 26.4.

What is this?

In January 2026, Apple and Google issued a joint statement. Here's the gist: the next generation of Apple Foundation Models will be built on Google's Gemini technology. In plain terms, Siri's brain is switching to Gemini.

Why is this such big news? Apple has always been the "we build everything ourselves" company. Chips, OS, apps — all in-house. Yet when it comes to AI, Apple gave up building it alone and partnered with Google. According to Bloomberg, the deal is worth roughly $1 billion per year.

Apple's AI struggles are nothing new, actually. They announced "Apple Intelligence" at WWDC 2024, promising a major Siri upgrade, but their internal hybrid architecture (legacy system + LLM) kept failing in about a third of test cases, causing repeated delays. Eventually, AI chief John Giannandrea was reassigned, and Craig Federighi (software head) and Mike Rockwell (Vision Pro lead) took over the project.

Tim Cook explained it this way: "Think of it as a collaboration. We'll continue to do our own work independently, but powering the personalized Siri is a collaboration with Google." The important thing is that from the user's perspective, nothing changes visually. No Google logo in sight. Siri is still Siri — it's just completely different on the inside.

What changes?

What was old Siri's biggest problem? Answers that ended with "Here's what I found on the web." Gemini-powered Siri is fundamentally different. Instead of parsing keywords, it's been completely rebuilt with a unified LLM core that understands context.

| Feature | Old Siri | Gemini-Powered Siri (iOS 26.4+) |
|---|---|---|
| Comprehension | Keyword matching, simple command parsing | Context & nuance understanding, pronoun tracking |
| Tasks | Single app, single action | Multi-step, cross-app automation |
| Personal context | Almost none | References emails, messages, photos, calendar |
| Screen awareness | Not possible | Reads current screen content + takes action |
| Response speed | 1–3 seconds | Under 0.5 seconds |
| Privacy | On-device + cloud | On-device + Private Cloud Compute maintained |

Craig Federighi put it this way: "This partnership puts us in a position to deliver an even bigger upgrade than what we originally announced."

Specifically, here's what becomes possible:

- "Find the email where Eric talked about ice skating" — Finds the email by context alone, no keywords needed.

- "Add this address to Eric's contact" — Reads the address from your text message screen and saves it to the Contacts app.

- "Edit this photo and put it in the Notes app" — Edits the photo, then switches to another app to complete the task.

- "What's my passport number?" — Reads the number from a passport image stored in your photos.

It's not a full chatbot yet

Siri in iOS 26.4 doesn't include long-term memory or extended conversation capabilities. True chatbot-level conversation is expected with iOS 27 (September 2026). Also, some advanced features reportedly had quality issues in internal testing and may be pushed to iOS 26.5 (May).

But why Google specifically?

Apple considered several candidates. They already had ChatGPT integration with OpenAI, and Anthropic (Claude) was also in the mix. But the final choice was Google. Here's why:

- Technical capability: Gemini 3 matched or outperformed GPT-4.5 on benchmarks

- Infrastructure: Decades of Search and YouTube infrastructure, custom AI chips (TPUs), billions of queries processed

- Privacy: Architecture compatible with Apple's Private Cloud Compute

- Existing relationship: Already exchanging billions of dollars annually through the Safari default search engine deal

- Cost efficiency: More efficient cost structure compared to OpenAI

As a result of this deal, OpenAI's ChatGPT mobile market share dropped from 69.1% to 45.3%, while Gemini surged from 14.7% to 25.1%. Fortune analyzed the deal as "a signal that Google has seized the momentum in the AI race."

The essentials: how to get started

- Check your iPhone compatibility

  You need an iPhone 15 Pro or later. Some features may be only partially supported on older models.

- Update to iOS 26.4

  Expected to launch in March 2026. Go to Settings → General → Software Update to get it.

- Enable Apple Intelligence

  Go to Settings → Apple Intelligence & Siri to turn it on. Initially, the full feature set is available only when the language is set to English (US).

- Test simple multi-step commands

  Try requests with two or more steps, like "Check my meeting schedule for tomorrow and find related emails." You'll immediately feel the difference from old Siri.

- Use cross-app automation

  Try cross-app commands like "Edit this photo and put it in the Notes app." Siri handles tasks across apps seamlessly.

Developers: prepare for App Intents

Third-party apps can participate in Siri's cross-app automation too. If you expose your app's actions through Apple's App Intents framework, users can invoke those features by voice.
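As a rough illustration, here is a minimal Swift sketch of exposing a single action through App Intents, assuming a hypothetical note-taking app. `AddNoteIntent`, `NotesShortcuts`, `normalizedNoteText`, and `NoteIntentError` are illustrative names, not Apple APIs. `AppIntent`, `@Parameter`, `AppShortcutsProvider`, and `AppShortcut` come from the AppIntents framework, and the `shortTitle`/`systemImageName` initializer assumes iOS 17 or later. How the Gemini-powered Siri decides to chain intents across apps is not something this code controls; it only shows the app's half of the contract.

```swift
import Foundation
#if canImport(AppIntents)
import AppIntents
#endif

/// Hypothetical helper: trims surrounding whitespace and rejects empty input.
/// Kept free of AppIntents so it compiles and can be unit-tested on any platform.
func normalizedNoteText(_ raw: String) -> String? {
    let trimmed = raw.trimmingCharacters(in: .whitespacesAndNewlines)
    return trimmed.isEmpty ? nil : trimmed
}

/// Hypothetical error thrown when the spoken note text is blank.
enum NoteIntentError: Error {
    case emptyText
}

#if canImport(AppIntents)
/// A hypothetical intent that exposes "add a note" to Siri and the Shortcuts app.
struct AddNoteIntent: AppIntent {
    static let title: LocalizedStringResource = "Add Note"

    // Required parameter: the system asks the user for it if the request doesn't supply it.
    @Parameter(title: "Note Text")
    var text: String

    func perform() async throws -> some IntentResult & ProvidesDialog {
        guard let body = normalizedNoteText(text) else {
            throw NoteIntentError.emptyText
        }
        // Hypothetical: hand `body` to your app's own storage layer here.
        _ = body
        return .result(dialog: "Saved your note.")
    }
}

/// Registers spoken phrases so the intent is available without any user setup.
struct NotesShortcuts: AppShortcutsProvider {
    static var appShortcuts: [AppShortcut] {
        AppShortcut(
            intent: AddNoteIntent(),
            phrases: ["Add a note in \(.applicationName)"],
            shortTitle: "Add Note",
            systemImageName: "note.text"
        )
    }
}
#endif
```

The validation logic sits outside the `#if canImport(AppIntents)` guard on purpose: it can be tested without the framework, while the intent stays a thin wrapper around it. For example, `normalizedNoteText("  Buy milk \n")` returns `"Buy milk"`, and an all-whitespace string returns `nil`, which makes the intent throw.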