You get to work and there's a PR waiting. Overnight, AI scanned your codebase for untested code paths, wrote tests, and opened a draft PR. Santiago (svpino) called this the "#1 skill for developers in 2026" — automate everything you can using AI.

What Is This?

Nightly AI test automation is exactly what it sounds like: while you sleep, AI scans your codebase, finds code paths without tests, generates test code, verifies the tests pass, and opens draft PRs. svpino calls it "nightly automation" — the key idea is that AI handles the repetitive parts first, and humans just review in the morning.

Two things made this possible. First, AI coding agents (Claude Code, Cursor, Copilot) have reached a practical quality level for test code generation. Second, running nightly automations via cron schedules in CI/CD tools like GitHub Actions is already standard practice. Tools like Claude Code Routines now support schedule triggers natively, so your automation runs in the cloud even when your laptop is closed.

Specialized tools like Diffblue Cover automatically generate Java unit tests and commit them whenever a PR is opened. TestSprite goes further with autonomous testing and self-healing for AI-generated code. "AI writes your tests overnight" isn't science fiction anymore.

Why "nightly"?

During the day, developers push code. Overnight, CI has enough time to analyze full coverage. By morning, AI-generated PRs are in your review queue, so you start the day reviewing instead of writing test code. It's about shifting synchronous work to async.

What Changes?

Writing tests has always been the #1 "should do but keep postponing" task for developers. After writing features, shipping is urgent, so tests get deprioritized and coverage drops. Nightly automation structurally breaks this cycle.

| | Traditional | Nightly AI Automation |
|---|---|---|
| When tests are written | Manually after feature dev (often skipped) | Every night, untested code auto-detected → generated |
| Coverage trend | Declines over time | Small daily increases (compounding) |
| Developer burden | Write + maintain test code | AI drafts, humans review |
| Feedback loop | Days to weeks after merge | Next morning via draft PR |
| Incident response | Add tests after bugs | Preemptive coverage before bugs |

David Proctor at Trilogy AI describes this as the "incident → AI analysis → test generation → CI" loop. When an incident happens, you feed the stack trace and recent diff to an AI, it proposes tests that would have caught the bug, and those tests join CI. Over time, your test suite becomes a history of past failures encoded as tests.
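The "feed the stack trace and recent diff to an AI" step can be sketched as a small prompt builder. This is a minimal illustration, not the workflow Proctor describes in detail: the `Incident` fields, the `build_regression_prompt` name, the prompt wording, and the truncation limit are all assumptions, and the call to an actual model is deliberately left out.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """Inputs fed to the model (field names are illustrative)."""
    title: str
    stack_trace: str
    recent_diff: str


def build_regression_prompt(incident: Incident, max_diff_chars: int = 8000) -> str:
    """Assemble a prompt asking for tests that would have caught the bug."""
    diff = incident.recent_diff
    if len(diff) > max_diff_chars:
        # Keep the prompt bounded and tell the model the diff was cut.
        diff = diff[:max_diff_chars] + "\n[diff truncated]"
    return "\n\n".join([
        f"Incident: {incident.title}",
        "Stack trace:\n" + incident.stack_trace.strip(),
        "Recent diff:\n" + diff.strip(),
        "Propose unit tests that fail on the buggy code and pass once it is "
        "fixed. Return only test code.",
    ])
```

The returned string would then go to whichever agent or API the team uses; the proposed tests should still pass through the same validation and review as any other generated test before joining CI.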

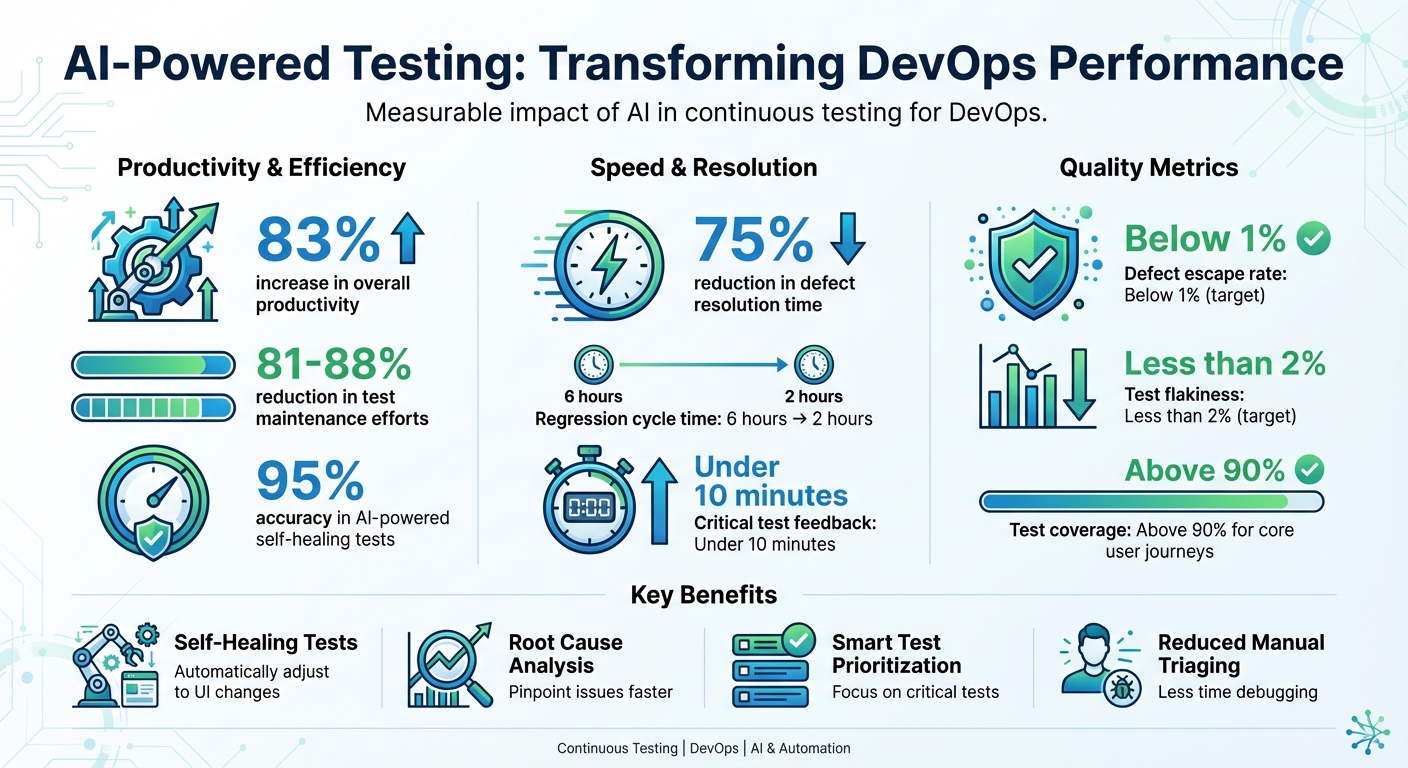

Gen AI testing tools take it further. Self-healing automatically fixes test locators when UI changes, and risk-based testing decides which tests to prioritize based on code changes. Testing is shifting from "write it then fix it when it breaks" to "AI maintains it for you."

Getting Started: Nightly AI Test Automation

- Create a coverage baseline

  First, measure your current test coverage. Add `jest --coverage`, `pytest --cov`, or Cobertura XML reports to CI. AI needs this to know what's missing.

- Set up a nightly cron workflow

  Create a GitHub Actions workflow with a `schedule` trigger set to `cron: '0 2 * * *'` (daily at 02:00 UTC; GitHub Actions evaluates schedules in UTC). Run a script that parses the coverage report and extracts untested files/functions.

- Connect AI test generation

  Feed the untested code to AI. Claude Code Routines supports schedule triggers for automatic execution, and Diffblue Cover plugs directly into GitHub Actions. You can also script Cursor or Copilot CLI calls.

- Validate generated tests → draft PR

  Run the AI-generated tests to verify they pass, commit only passing tests to a `claude/`-prefixed branch, and open a draft PR. Log and skip any failures.

- Build a morning review routine

  Developers review AI-generated draft PRs each morning, tweak if needed, and merge. The key mindset: treat AI-generated tests like drafts from a tireless junior engineer who needs review.
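The coverage-parsing and validation steps above can be sketched in Python. This is one possible shape, assuming a Cobertura-style XML report (the format produced by `pytest --cov --cov-report=xml`) and pytest as the test runner. The function names `uncovered_lines`, `pick_targets`, `keep_passing`, and `run_pytest` are hypothetical, as is the default limit of five files per night. The AI generation step sits between the two halves and is not shown.

```python
import subprocess
import sys
import xml.etree.ElementTree as ET
from collections import defaultdict
from typing import Callable, Dict, Iterable, List


def uncovered_lines(cobertura_xml: str) -> Dict[str, List[int]]:
    """Map each source file to its line numbers that have zero hits."""
    root = ET.fromstring(cobertura_xml)
    missing = defaultdict(set)
    for cls in root.iter("class"):
        filename = cls.get("filename")
        if filename is None:
            continue
        # Lines can appear under both <methods> and <lines>; a set dedupes them.
        for line in cls.iter("line"):
            if int(line.get("hits", "0")) == 0:
                missing[filename].add(int(line.get("number")))
    return {name: sorted(nums) for name, nums in missing.items()}


def pick_targets(missing: Dict[str, List[int]], limit: int = 5) -> List[str]:
    """Files with the most uncovered lines first, capped to keep each PR small."""
    ranked = sorted(missing, key=lambda name: (-len(missing[name]), name))
    return ranked[:limit]


def run_pytest(path: str) -> bool:
    """Run one test file in a subprocess; exit code 0 means every test passed."""
    result = subprocess.run(
        [sys.executable, "-m", "pytest", "-q", path], capture_output=True
    )
    return result.returncode == 0


def keep_passing(
    test_files: Iterable[str], run_test: Callable[[str], bool] = run_pytest
) -> List[str]:
    """Keep only generated test files that pass; log and skip the rest."""
    kept = []
    for path in test_files:
        if run_test(path):
            kept.append(path)
        else:
            print(f"skipping failing generated test: {path}")
    return kept
```

A nightly script might read `coverage.xml`, pass its contents to `uncovered_lines`, hand the `pick_targets` result to the generation step, and commit only what `keep_passing` returns. Capping the number of target files is a design choice that keeps each morning's draft PR small enough to review properly.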

Don't blindly trust AI tests

AI-generated tests are like the work of a "tireless junior engineer": fast, but not infallible. Always review them for business logic correctness, because a generated test can pass while asserting the wrong behavior. Authentication, payments, and data migrations are especially risky areas in which to rely on AI tests alone.
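One way to make that extra scrutiny routine is to flag draft PRs whose generated tests touch sensitive areas. The sketch below is only an illustration: the `SENSITIVE_PATTERNS` globs and the `needs_close_review` helper are hypothetical and would need adjusting to the repository's actual layout.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List, Sequence

# Hypothetical path patterns for the risky areas named above.
SENSITIVE_PATTERNS = ("*auth*", "*payment*", "*migration*")


def needs_close_review(
    changed_paths: Iterable[str],
    patterns: Sequence[str] = SENSITIVE_PATTERNS,
) -> List[str]:
    """Return the changed paths that match any sensitive pattern."""
    return [
        path
        for path in changed_paths
        # Compare in lowercase so "Payments/" and "payments/" both match.
        if any(fnmatchcase(path.lower(), pattern) for pattern in patterns)
    ]
```

If the returned list is non-empty, the nightly script could add a label or a note to the draft PR so reviewers know to check those tests against the real business rules.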