From writing code to judging code: what will you do now that this shift has already begun?

Addy Osmani, Google Cloud AI Director and former Chrome Engineering Lead, made a provocative statement at JS Nation US 2026: "In 2026, senior developers are nothing more than highly paid code editors." This isn't a put-down. It's a paradox that points to the core competency this era demands.

What Is This?

Since 2025, Addy Osmani has been mapping the practical limits of AI coding in his "70% problem" and "80% problem" series. AI gets you 70–80% of the way, but the remaining 20–30% (quality, coherence, and last-mile work) still falls to humans.

In this interview, he goes further. The developer's core role is shifting from "the person who writes code" to "the person who evaluates and edits code." Why does this feel like an identity crisis rather than just a role change?

Because for too long we've believed that "great coder = great developer." In reality, architectural judgment, an understanding of the business context, and setting the team's direction were always more important. AI acceleration has simply made that visible.

The numbers back this up. In the 2025 Stack Overflow Developer Survey, 84–90% of developers already use AI coding tools, and 51% use them daily. Claude Code reached a billion-dollar annualized run rate within just six months of its full release. AI pair programming is now table stakes, not a competitive advantage.

What Changes?

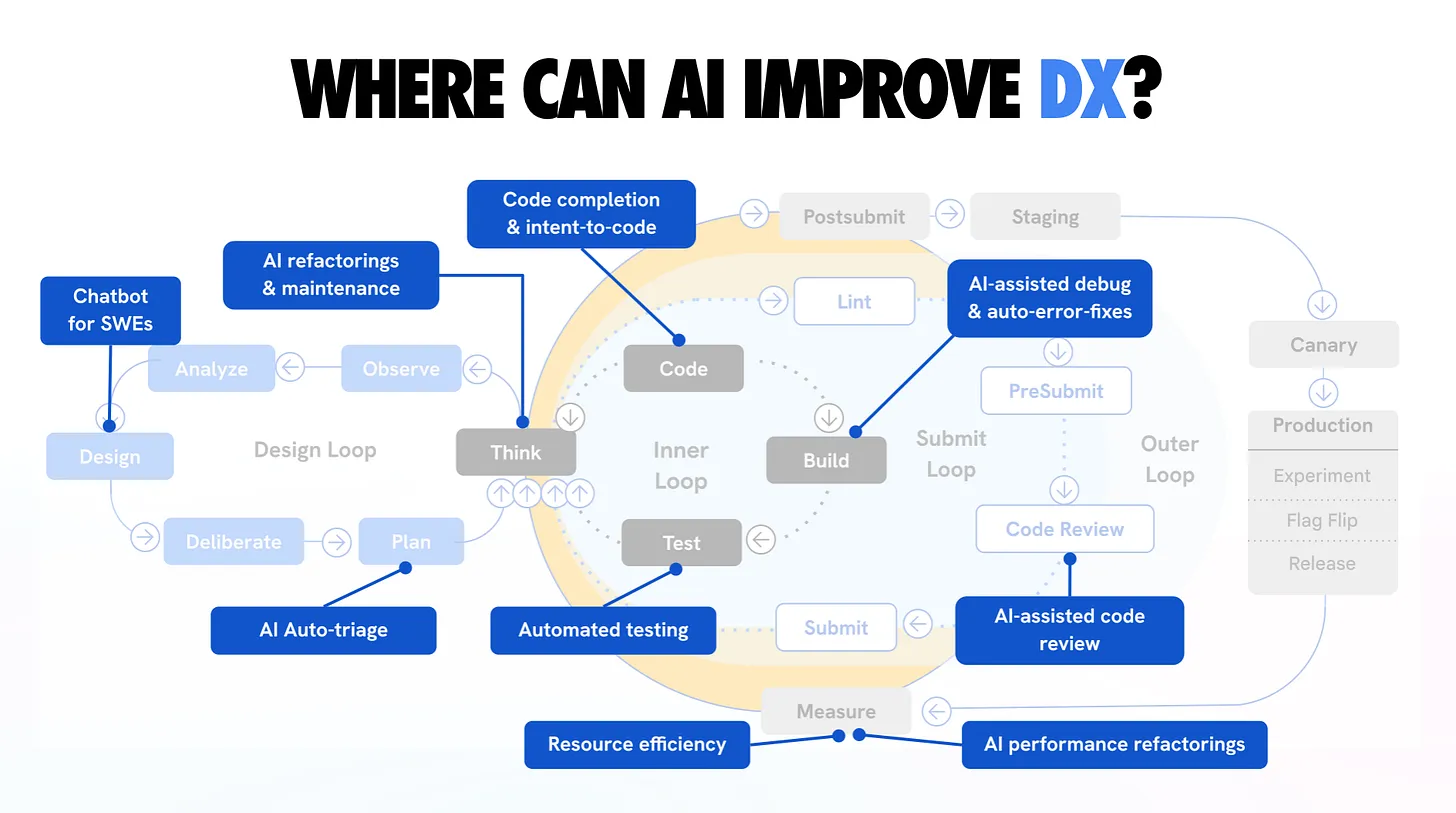

The most direct change is role redefinition. It's not just about "developing faster" — it's about what you judge and where you focus your attention.

| Traditional Senior Role | AI-Era Role | Impact |
|---|---|---|
| Writing code directly | Evaluating and editing AI output | Core role shift |
| Memorizing syntax and libraries | Context engineering | Essential new skill |
| Implementing PRs yourself | Orchestrating agents | Productivity multiplier |
| Code review to educate juniors | Critical analysis of "why the AI chose this" | Pedagogy shift |
| Code completeness as the output | System coherence and architecture as the output | Change in evaluation criteria |

Osmani sums up his approach as "I want PRs, not issues." He assigns 3–4 tasks to agents via the GitHub app, goes for a walk, and comes back to review the resulting PRs. This is the new reality.

According to Annie Vella's research, 77% of developers report spending less time writing code directly. GitClear's 2025 analysis found that copy-pasted code increased 17.1% as AI code generation tools became widespread, and the rate of code being revised within two weeks rose 26%. Rapidly generated code is not necessarily good code.

This is exactly where senior developer value gets redefined. The risk is "comprehension debt": code that ships faster than anyone on the team understands it. What becomes valuable is someone who can actually understand AI-generated code and take responsibility for it. Senior developers ship 2.5x more AI-generated code to production than juniors, not because they're better at prompting, but because their judgment in validating and integrating that code is stronger.

How to Start: The Practical Workflow

Here's Addy Osmani's step-by-step LLM coding workflow — an "AI-assisted engineering" approach, distinct from vibe coding.

- Spec before code

Don't start with vague requests. Have the AI iteratively ask you questions about the requirements, then compile the answers into a spec.md. Do a "15-minute waterfall" to align on the plan before generating any code.

- Break into small chunks

Asking for everything at once produces inconsistent code. Work in the rhythm "Implement Step 1 → validate → Step 2," running the tests and committing after each step.

- Provide extensive context

AI output quality is proportional to context quality. Include the relevant files, constraints, preferred patterns, and pitfalls to avoid. Tools like gitingest or repo2txt help package your codebase.

- Verify without compromise

AI code can be confidently wrong. Treat every AI-generated snippet like a junior developer's code and always run the tests. Asking a second AI (a different model) to review it is also effective.

- Commit = save points

When working with AI, commit more often. If the AI's next suggestion goes off track, you need a recent checkpoint to revert to. The rhythm is "complete task → test → commit."

- Rules files to guide the AI

Create CLAUDE.md, .cursorrules, or similar rules files. Specify your coding style, forbidden patterns, and preferred libraries so the AI behaves like a trained team member.
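The "Implement Step 1 → validate → Step 2" cadence and the "complete task → test → commit" rhythm amount to a small control loop. The sketch below is a hypothetical illustration, not part of any tool Osmani describes: `run_checkpointed_steps`, `StepResult`, and the `implement`, `run_tests`, `commit`, and `revert` callables are names invented here. In real use they might wrap your agent, your test runner, and commands such as `git commit -am` and `git reset --hard HEAD`; here they are plain functions so the loop can run on its own.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class StepResult:
    """Outcome of running a sequence of small, checkpointed steps (hypothetical)."""
    committed: List[str] = field(default_factory=list)  # steps saved as commits
    failed_step: Optional[str] = None  # first step whose tests failed, if any


def run_checkpointed_steps(
    steps: List[str],
    implement: Callable[[str], None],
    run_tests: Callable[[], bool],
    commit: Callable[[str], None],
    revert: Callable[[], None],
) -> StepResult:
    """Implement one step at a time; commit on passing tests, revert and stop on failure.

    Every successful step becomes a save point, so a failed step only loses
    the work done since the last commit.
    """
    result = StepResult()
    for step in steps:
        implement(step)  # e.g. ask the agent to do only this step
        if run_tests():
            commit(f"Complete: {step}")  # checkpoint to fall back on later
            result.committed.append(step)
        else:
            revert()  # discard the broken attempt
            result.failed_step = step
            break  # stop so a human can review before continuing
    return result


# Usage with in-memory stand-ins instead of a real agent, test runner, and git:
log = []
outcomes = iter([True, False])  # step 1 passes its tests, step 2 fails
res = run_checkpointed_steps(
    ["Step 1: parse config", "Step 2: add cache"],
    implement=lambda s: log.append(("implement", s)),
    run_tests=lambda: next(outcomes),
    commit=lambda msg: log.append(("commit", msg)),
    revert=lambda: log.append(("revert",)),
)
print(res)
# StepResult(committed=['Step 1: parse config'], failed_step='Step 2: add cache')
```

Stopping at the first failing step, rather than letting the loop continue, is a deliberate choice in this sketch: it mirrors the advice to revert to the last checkpoint and re-plan instead of stacking more AI output on top of a broken state.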
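The "provide extensive context" step essentially means turning a set of source files into one prompt-ready text bundle. The Python sketch below illustrates the idea. It is not how gitingest or repo2txt are actually implemented, and the function name `pack_context`, the default extension list, the skipped directory names, and the character budget are all assumptions chosen for illustration.

```python
from pathlib import Path

# Hypothetical defaults for illustration; adjust them for your own repository.
DEFAULT_EXTENSIONS = {".py", ".ts", ".md", ".toml", ".json"}
SKIP_DIRS = {".git", "node_modules", "__pycache__", ".venv", "dist", "build"}


def pack_context(root, extensions=DEFAULT_EXTENSIONS, max_chars=100_000):
    """Concatenate matching files under `root` into one text bundle.

    Each file is prefixed with a header naming its relative path, so the model
    can tell where one file ends and the next begins. Files that would push the
    bundle past `max_chars` are listed as skipped instead of silently truncated.
    """
    root = Path(root)
    parts, skipped, total = [], [], 0
    for path in sorted(root.rglob("*")):
        rel = path.relative_to(root)
        # Ignore anything inside a skipped directory, plus non-matching files.
        if any(part in SKIP_DIRS for part in rel.parts[:-1]):
            continue
        if not path.is_file() or path.suffix not in extensions:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        chunk = f"=== {rel.as_posix()} ===\n{text.rstrip()}\n"
        if total + len(chunk) > max_chars:
            skipped.append(rel.as_posix())
            continue
        parts.append(chunk)
        total += len(chunk)
    if skipped:
        parts.append("=== skipped (over budget) ===\n" + "\n".join(skipped) + "\n")
    return "\n".join(parts)
```

For example, `pack_context("src", max_chars=50_000)` would return a single string you can paste into a prompt alongside your constraints and preferred patterns. Listing over-budget files by name, instead of cutting them off mid-file, keeps the model from reasoning about half a file without knowing it is incomplete.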

"Understanding the fundamentals remains a superpower. The extreme view that AI makes programming languages irrelevant is wrong. Deep technical understanding is what lets you properly evaluate and integrate AI output."

Further Reading

- My LLM coding workflow going into 2026 (addyosmani.com): Addy Osmani's own practical LLM workflow guide, systematically laying out everything from spec writing to agent usage to version control.

- The Engineer Identity Crisis: When AI Codes Better Than You (becomingagentic.ai): "53% of senior developers believe LLMs code better than most humans." Real data on the identity crisis and strategies for adapting.

- The Software Engineering Identity Crisis (annievella.com): Annie Vella's research-based analysis, exploring the roots of the developer identity crisis through historical context and asking why we staked our identity on coding.

- From Writer to Director: The Developer Identity Crisis of 2026 (medium.com): "I finished a feature in 90 minutes and felt like a fraud." A developer's firsthand account of the identity transition.