OpenAI shipped a million-line codebase with zero lines written by a human. The secret? They handed the code to agents — but first, they designed the environment those agents would work in.

What Is It?

A harness is what you use to control a horse — reins, saddle, bit. It's a set of tools for directing something powerful that would otherwise go wherever it wants. AI coding agents need exactly the same thing.

Harness Engineering is an emerging discipline focused on designing the environment, constraints, and feedback loops that AI agents operate within. If prompt engineering is about "what to tell the agent," harness engineering is about "what world to put the agent in."

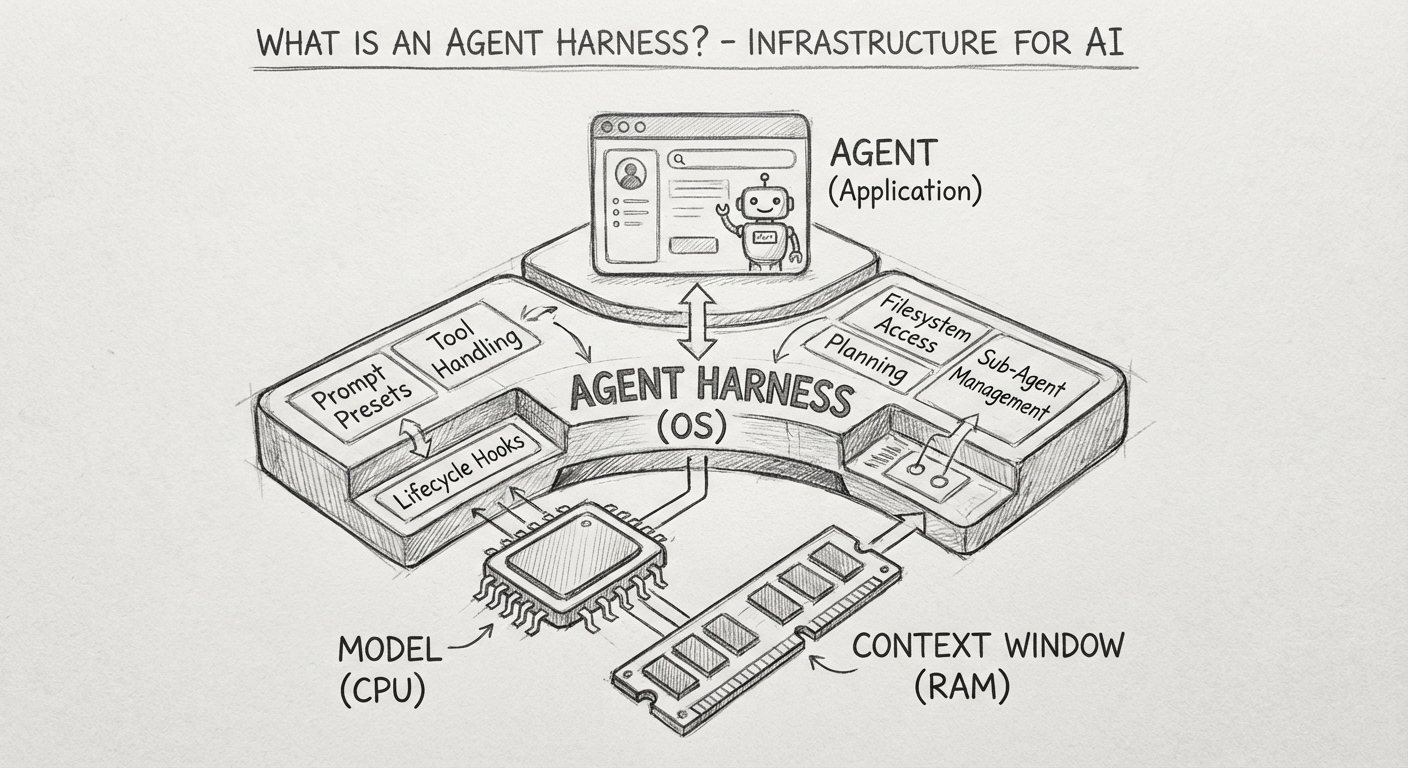

Philipp Schmid (formerly of Hugging Face) frames it as a computer analogy: if the model is the CPU and the context window is RAM, then the harness is the operating system. It manages context, handles boot sequences (prompts and hooks), and provides standard drivers (tool handling).

This isn't just theory. The OpenAI Codex team wrote over a million lines of code with agents over five months, with three engineers merging an average of 3.5 PRs per engineer per day. What those engineers actually did wasn't write code; it was design the harness. LangChain kept the model the same, changed only the harness, and jumped from Top 30 to Top 5 on Terminal Bench 2.0.

Key Takeaway

Prompt engineering = crafting effective instructions for a single interaction

Context engineering = optimizing what information the model sees

Harness engineering = designing the entire agent system — environment, constraints, feedback, and lifecycle

The Building Blocks: Commands, Skills, and Hooks

@_petercha's vibe coding glossary series does a great job laying out these components. Using Claude Code as the reference, the three core tools that make up a harness are commands, skills, and hooks.

What Changes?

You've probably seen what happens when you run an agent without a harness. It drifts in the wrong direction, repeats the same mistakes, and forgets your early instructions once the context gets long enough. It doesn't matter how good the model is — without a harness, you're working against yourself.

| | Without a Harness | With a Harness |
|---|---|---|
| Direction | Prompt-dependent, drifts often | Auto-corrects via constraints and feedback |
| Quality consistency | Different results every time | Enforced by architecture rules + linters |
| Long-running tasks | Drifts after ~50 steps | Context compression + subagent distribution |
| Team collaboration | Every developer does it differently | Shared harness keeps the whole team aligned |
| Scalability | Constant human intervention required | Autonomous operation → humans just review |

According to NxCode's breakdown, harness engineering rests on three pillars:

- Context Engineering

  Making sure the agent sees the right information at the right time. Use AGENTS.md as a table of contents, not an encyclopedia: put the detailed docs in a structured docs/ directory.

- Architecture Constraints

  Instead of telling the agent to "write good code," you mechanically enforce what good code looks like. Dependency direction, naming conventions, and file size limits get verified by linters and CI.

- Entropy Management

  AI-generated code drifts over time. Run periodic "cleanup agents" that scan for documentation inconsistencies, pattern violations, and dead code.
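To make the Architecture Constraints pillar concrete, here is a minimal sketch of the kind of check a linter or CI step could run. Everything specific in it is an assumption for illustration: the layer names (`ui`, `service`, `domain`), the `ALLOWED_IMPORTS` table, the 300-line `MAX_LINES` limit, and the `check_source` helper are all hypothetical, and it only handles Python files.

```python
import ast

# Hypothetical layering rule: each layer may import only the layers listed for it.
ALLOWED_IMPORTS = {
    "ui": {"service", "domain"},
    "service": {"domain"},
    "domain": set(),
}
MAX_LINES = 300  # hypothetical file size limit


def check_source(layer: str, source: str) -> list:
    """Return violation messages for one file that belongs to `layer`."""
    violations = []

    # File size limit: count lines in the source text.
    line_count = len(source.splitlines())
    if line_count > MAX_LINES:
        violations.append(f"file has {line_count} lines, limit is {MAX_LINES}")

    # Dependency direction: inspect every import statement in the file.
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            modules = [node.module]
        else:
            continue
        for module in modules:
            top = module.split(".")[0]
            # Only police imports between known layers; ignore stdlib and third-party.
            if top in ALLOWED_IMPORTS and top != layer and top not in ALLOWED_IMPORTS[layer]:
                violations.append(f"{layer} must not import {top}")
    return violations
```

The point of a check like this is that the agent gets a concrete, repeatable failure message instead of a vague instruction. A CI job could call `check_source` on each changed file and fail the build when the returned list is non-empty.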

3 Real-World Harness Frameworks Compared

Enough theory — here's a look at open-source harness frameworks you can actually use today.

| Framework | Core Philosophy | GitHub Stars | Supported Agents |
|---|---|---|---|
| Superpowers | TDD-driven autonomous dev workflow | 76.5K | Claude Code, Codex, Cursor, OpenCode |
| Oh-my-claudecode | Teams-first multi-agent orchestration | 9.2K | Claude Code (+ Codex, OpenCode variants) |
| Ouroboros | Socratic intent extraction, oscillation prevention | 1.1K | Claude Code |

Superpowers, built by Jesse Vincent, is an agentic skills framework. Its standout feature is enforced TDD — the agent must write tests before writing any code, then follow the red → green → refactor cycle. Install it and you get a full automated workflow: brainstorming → design → planning → subagent execution → code review → branch cleanup.

Oh-my-claudecode, built by Korean developer Yeachan Heo, structures 21 specialized agents — code reviewer, debugger, architect, and more — to collaborate like a team. It comes in a whole family: oh-my-claudecode for Claude Code, oh-my-codex for Codex, and oh-my-openagent for OpenCode.

Ouroboros, built by Q00, follows the philosophy of "Stop prompting. Start specifying." It uses Socratic questioning to draw out the user's real intent, then tracks ambiguity (Ambiguity Score) and agent confusion (Oscillation) as numeric metrics — so you can actually see when your agent is going in circles.

Getting Started

- Start with CLAUDE.md (or AGENTS.md)

  Document your project's architecture, coding conventions, and directory structure in 100 lines or fewer. Table of contents, not encyclopedia.

- Set deterministic rules with Hooks

  In .claude/settings.json, add a PostToolUse hook to auto-run linting, and a Stop hook to send a completion notification.

- Install a harness framework and try it out

  If you're on Claude Code, one line does it: /plugin install superpowers. TDD workflow kicks in automatically.

- Add constraints gradually

  Don't try to build the perfect harness on day one. Start with basic linting, add architecture constraints once you see patterns, and bring in subagents only when you actually need them.
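As a rough illustration of the PostToolUse linting step, here is a sketch of a script such a hook could run. It rests on several assumptions that should be checked against the Claude Code hooks documentation: that the hook receives a JSON payload on stdin with `tool_name` and `tool_input.file_path` fields, and that exiting with code 2 surfaces stderr back to the agent. The `file_to_lint` helper, the `.py` filter, and the use of `ruff` as the linter are hypothetical choices. Registering the script in .claude/settings.json is not shown.

```python
import json
import subprocess
import sys
from typing import Optional

LINTABLE_SUFFIXES = (".py",)  # hypothetical: lint only Python files


def file_to_lint(payload: dict) -> Optional[str]:
    """Pick the edited file out of a hook payload, or None if there is nothing to lint."""
    # Assumed payload shape: {"tool_name": ..., "tool_input": {"file_path": ...}}
    if payload.get("tool_name") not in {"Edit", "Write"}:
        return None
    path = payload.get("tool_input", {}).get("file_path", "")
    return path if path.endswith(LINTABLE_SUFFIXES) else None


def main() -> int:
    payload = json.load(sys.stdin)
    path = file_to_lint(payload)
    if path is None:
        return 0
    # "ruff" is an assumed linter; substitute whatever the project already uses.
    result = subprocess.run(["ruff", "check", path], capture_output=True, text=True)
    if result.returncode != 0:
        print(result.stdout + result.stderr, file=sys.stderr)
        return 2  # assumed convention: exit code 2 feeds stderr back to the agent
    return 0

# In the actual hook script, finish with: sys.exit(main())
```

Keeping the payload parsing in a small pure function makes the hook easy to test without running an agent, which fits the advice to start simple and add constraints gradually.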

Heads Up: The over-engineering trap

Philipp Schmid's key advice: "Build to Delete." Models improve fast, so what needed a complex pipeline in 2024 might be a single prompt in 2026. If you over-engineer your control flow, the next model update can break your whole system.